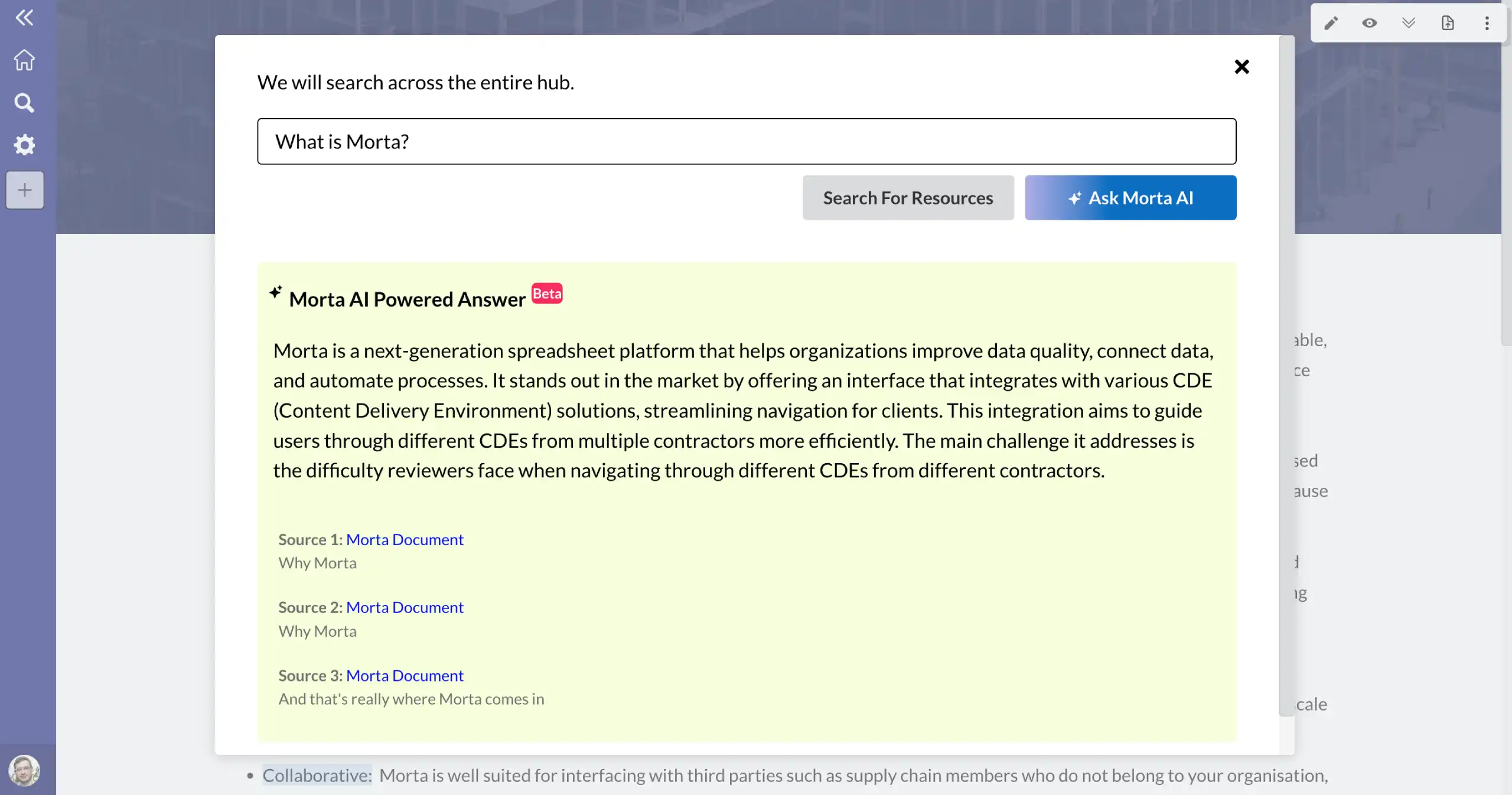

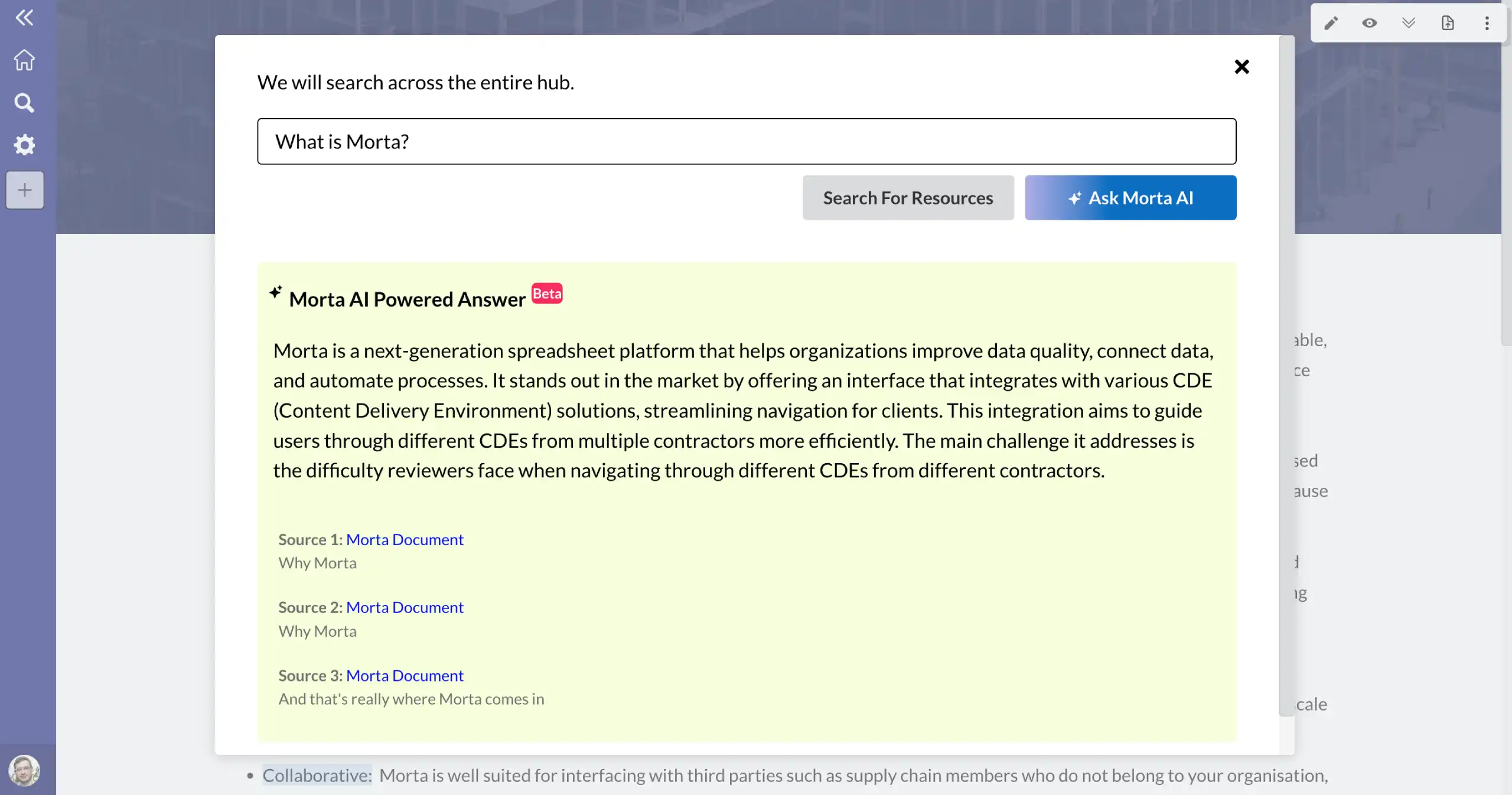

Stop hunting for information and let Morta AI find the answer.

Make sure that all of your project members can find the information they need, when they need it. Morta AI only uses information contained within your documents so there is no risk of incorrect answers.

Get instant answers.

Stop searching around for information in your documents. Morta AI can provide you with the answer you need in seconds.

Save time searching for information

See what questions are being asked

Grounded in your documents.

The answers provided are based on the information contained in your documents. No background information is used when answering the questions.

Accurate answers

Automated question answering

Data never leaves Morta servers.

Unlike other companies, Morta does not use OpenAI or any other external services to provide answers. All data stays within Morta and your data is never used to train or improve models.

Frequently asked questions.

Common questions about Morta AI and how it works.

How does Morta AI work?

Morta AI uses RAG (Retrieval-Augmented Generation) to provide answers to your questions. RAG is an AI framework that combines the strengths of traditional information retrieval systems (such as search and databases) with the capabilities of generative large language models (LLMs). Morta AI first retrieves the passages in your documents that are most relevant to your question, then passes them to the LLM, which writes the answer from those passages. Grounding the answer in your document data makes it more accurate, up-to-date, and relevant to your specific question.
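The retrieval step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Morta's implementation: the `embed`, `cosine`, and `retrieve` names are hypothetical, the sample chunks are invented, and a simple word-count vector stands in for a real embedding model.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (assumption: stands in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[str], k: int = 3) -> list[tuple[float, str]]:
    """Return the k chunks most similar to the question, best match first."""
    q = embed(question)
    scored = [(cosine(q, embed(c)), c) for c in chunks]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


# Hypothetical document chunks, invented for illustration only.
chunks = [
    "Concrete pours require sign-off from the site engineer.",
    "Fire doors must be inspected every six months.",
    "All drawings are issued through the document control register.",
    "Site inductions take place every Monday at 8am.",
]

top = retrieve("How often are fire doors inspected?", chunks, k=3)
for score, chunk in top:
    print(f"{score:.2f}  {chunk}")
```

In a real pipeline the retrieved chunks would then be handed to the generation model as context for the answer.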

Is my data used to train the AI model?

No. Morta AI answers from your documents at query time and does not use any external data sources. Your data is never used to train or improve the AI model.

Which models does Morta use?

Morta uses open-source models to provide answers to your questions. For the embedding phase, Morta uses the nomic-embed-text model. For the generation phase, Morta uses the mistral model.

Does the AI model use background knowledge?

No. Morta AI only uses the information contained in your documents when answering questions. The LLM is instructed to answer only from the provided context and not to draw on prior knowledge. This ensures all answers come directly from your documentation.
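As a rough illustration of what such an instruction can look like, the sketch below assembles a context-only prompt in Python. The `build_prompt` function and the wording of the instruction are assumptions made for this example; Morta's actual prompt is not published here.

```python
def build_prompt(question: str, sources: list[str]) -> str:
    """Assemble a prompt that restricts the model to the supplied context (hypothetical wording)."""
    # Number each source so the answer can point back to the passage it came from.
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(sources, start=1))
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say that you do not know.\n\n"
        f"Context:\n{numbered}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Invented example passages, for illustration only.
prompt = build_prompt(
    "How often are fire doors inspected?",
    [
        "Fire doors must be inspected every six months.",
        "All drawings are issued through the document control register.",
        "Site inductions take place every Monday at 8am.",
    ],
)
print(prompt)
```

Numbering the passages is one simple way to let each answer point back to its sources.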

How do I know the answer is correct?

Every answer is sourced with three references, so you can see exactly which parts of your documents it was generated from.

Ready to connect your controls?

Get in touch with our team to see how Morta can drive delivery performance across your projects.